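Let's Talk About Advanced Kube-Apiserver Memory Optimization

Principles

Memory optimization is a classic problem. Before looking at what Kubernetes actually did, it helps to abstract the process and think about how we would approach it ourselves. The problem reduces to this: many concurrent external requests fetch data from a server. How can we lower the server's memory consumption without hurting throughput? There are generally a few approaches:

- Cache the serialized results

- Reduce memory allocations during serialization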

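Data compression is probably not a good fit here. Compression does reduce network bandwidth and can therefore speed up responses, but it does little to reduce memory usage on the server. kube-apiserver already supports gzip compression: the client only needs to set the Accept-Encoding header to gzip. See the official documentation[1] for details.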

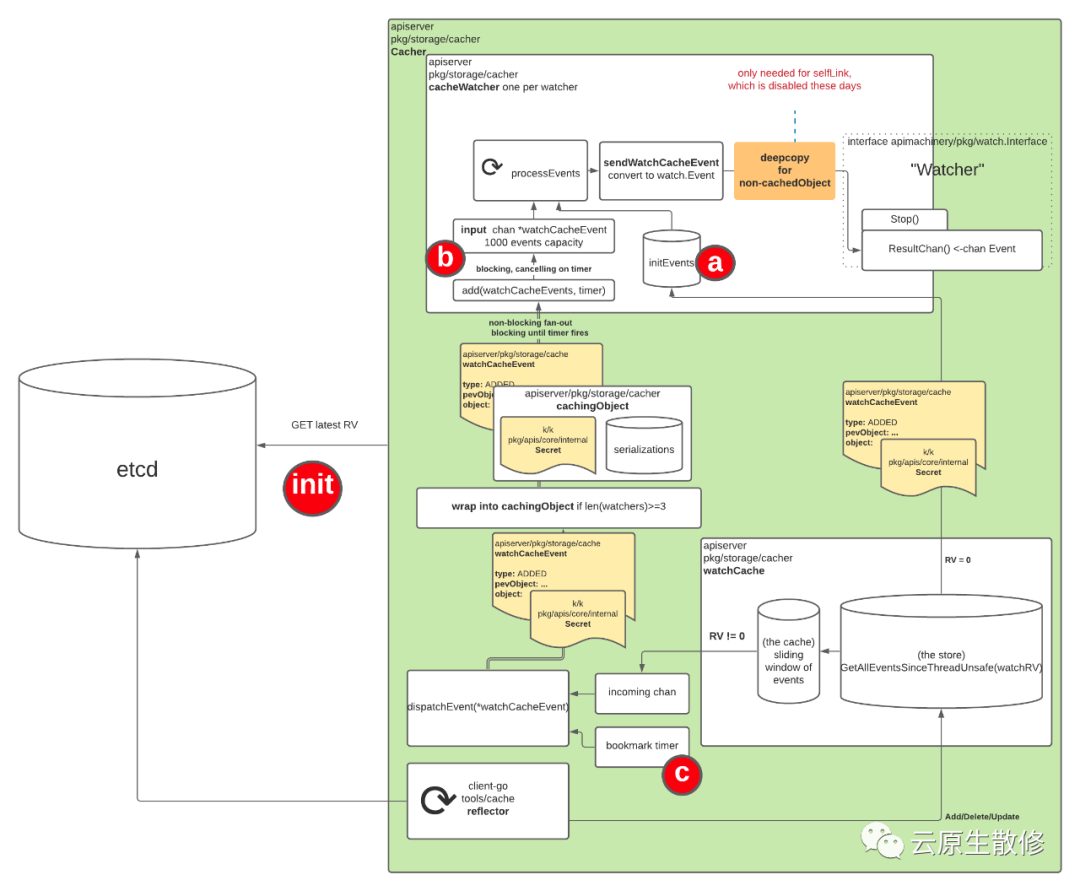

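Caching serialized results pays off when there are many clients, especially when the server has to send the same data to several clients at once. The object then only needs to be serialized once, and every subsequent client can be sent the existing result directly, avoiding unnecessary CPU and memory overhead. The cache itself does consume memory, though: with only a few clients the benefit may be small, and the cache may even increase total memory usage. kube-apiserver watch requests fit this scenario very closely.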

The rest of this article describes how kube-apiserver implements these two optimizations.

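Implementation

The issues and optimizations listed in the timelines below are very likely only a subset of all the related work.

Caching serialization results

Timeline

- As early as 2019, a community member reported an issue[2]: in a 5,000-node cluster, creating a single large Endpoints object (5,000 Pods, close to 1 MB in size) could completely overload kube-apiserver within 5 seconds.

- The community then tracked down the cause and proposed KEP 1152 less object serializations[3], which lowers kube-apiserver load and the number of memory allocations by no longer serializing the same object repeatedly for different watchers. The feature shipped in v1.17; in 5,000-node tests it reduced memory allocations by ~15% and CPU usage by ~5%. However, it only applied to watches over plain HTTP, not WebSocket.

- More than three years later, in 2023, Refactor apiserver endpoint transformers to more natively use Encoders #119801[4] refactored the serialization logic so that all serialization goes through the Encoder interface; the corresponding issue 83898[5] had been opened back in 2019. The refactoring also removed the WebSocket limitation mentioned in the previous item, and shipped in 1.29.

So if you do not use watch over WebSocket (by default watch uses HTTP with Transfer-Encoding: chunked), upgrading to 1.17 or later should, in theory, be enough to benefit from this optimization.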

How it works


A new CacheableObject interface was introduced, and all Encoders were extended to support it:

```go
// Excerpt: imports and the definition of Object are omitted.

// Identifier represents an identifier.
// Identifiers of two different objects should be equal if and only if for every
// input the output they produce is exactly the same.
type Identifier string

type Encoder interface {
	// ... other methods elided ...

	// Identifier returns an identifier of the encoder.
	// Identifiers of two different encoders should be equal if and only if for every input
	// object it will be encoded to the same representation by both of them.
	Identifier() Identifier
}

// CacheableObject allows an object to cache its different serializations
// to avoid performing the same serialization multiple times.
type CacheableObject interface {
	// CacheEncode writes an object to a stream. The <encode> function will
	// be used in case of cache miss. The <encode> function takes ownership
	// of the object.
	// If CacheableObject is a wrapper, then deep-copy of the wrapped object
	// should be passed to <encode> function.
	// CacheEncode assumes that for two different calls with the same <id>,
	// <encode> function will also be the same.
	CacheEncode(id Identifier, encode func(Object, io.Writer) error, w io.Writer) error

	// GetObject returns a deep-copy of an object to be encoded - the caller of
	// GetObject() is the owner of returned object. The reason for making a copy
	// is to avoid bugs, where caller modifies the object and forgets to copy it,
	// thus modifying the object for everyone.
	// The object returned by GetObject should be the same as the one that is supposed
	// to be passed to <encode> function in CacheEncode method.
	// If CacheableObject is a wrapper, the copy of wrapped object should be returned.
	GetObject() Object
}

// Encode is defined on a concrete encoder type (here Serializer), because
// methods cannot be declared on the Encoder interface itself.
func (s *Serializer) Encode(obj Object, stream io.Writer) error {
	// A cacheable object decides whether to call doEncode (cache miss)
	// or to write a previously cached result (cache hit).
	if co, ok := obj.(CacheableObject); ok {
		return co.CacheEncode(s.Identifier(), s.doEncode, stream)
	}
	return s.doEncode(obj, stream)
}

func (s *Serializer) doEncode(obj Object, stream io.Writer) error {
	// Existing encoder logic (elided).
}

// serializationResult captures a result of serialization.
type serializationResult struct {
	// once should be used to ensure serialization is computed once.
	once sync.Once

	// raw is serialized object.
	raw []byte
	// err is error from serialization.
	err error
}

// metaRuntimeInterface implements runtime.Object and
// metav1.Object interfaces.
type metaRuntimeInterface interface {
	runtime.Object
	metav1.Object
}

// cachingObject is an object that is able to cache its serializations
// so that each of those is computed exactly once.
//
// cachingObject implements the metav1.Object interface (accessors for
// all metadata fields). However, setters for all fields except from
// SelfLink (which is set later in the path) are ignored.
type cachingObject struct {
	lock sync.RWMutex

	// Object for which serializations are cached.
	object metaRuntimeInterface

	// serializations is a cache containing object's serializations.
	// The value stored in atomic.Value is of type serializationsCache.
	// The atomic.Value type is used to allow fast-path.
	serializations atomic.Value
}
```
